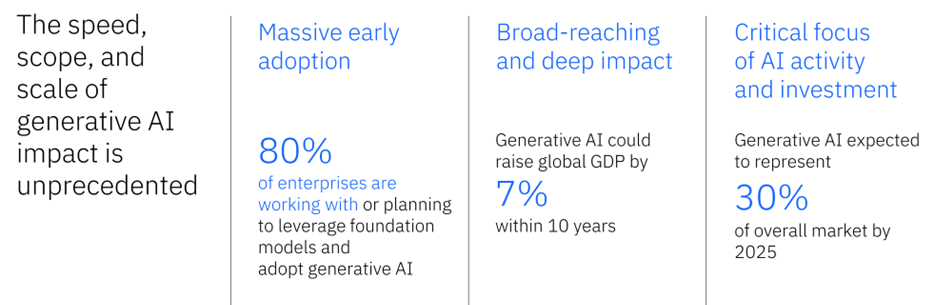

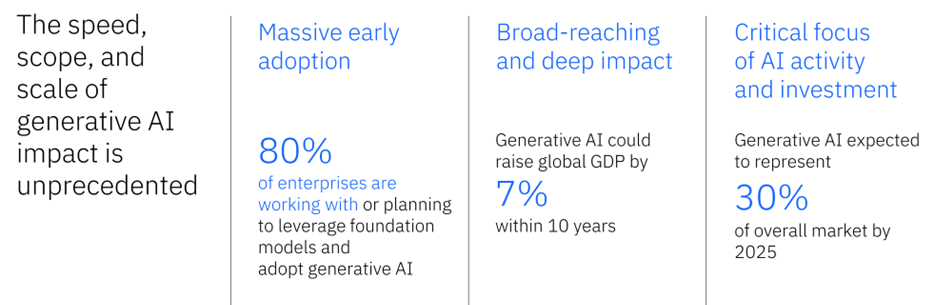

Demand for generative AI has grown sharply in recent years, highlighting its potential to transform a wide range of industries. This widespread adoption, however, presents considerable challenges, such as ethical concerns, security vulnerabilities, and the need for substantial computational resources. As businesses aim to harness the potential of generative AI, they must navigate these complexities to maximise the benefits while minimising the potential drawbacks.

Public Spectrum caught up with Kieran Hagan, the Data and AI Segment Leader at IBM Software Group, who has extensive experience in addressing these issues. Organisations can draw on his knowledge of how to implement generative AI technologies effectively. His viewpoints are especially pertinent as companies strive to balance innovation and accountability.

Hagan’s credentials are exceptional, showcasing his expertise in the industry. He has led multiple AI initiatives at IBM, making significant contributions to the progress of machine learning and data analytics. His role entails assisting businesses in navigating the complexities of AI implementation, helping them utilise state-of-the-art solutions while tackling important ethical and operational issues.

Kieran Hagan discusses the significance of navigating generative AI’s complex challenges.

1. What is the role of generative AI in cloud and digital transformation strategies?

This is the new workload demand for industry. GenAI is considered the new information technology revolution, fuelling digital transformation projects and cloud adoption. Generative AI can play a crucial role in cloud and digital transformation strategies by helping businesses automate and streamline their operations, enabling them to achieve greater agility, scalability, and cost savings. You only have to look at the “AI bump” in the US stock market and the increasing discussion of AI and regulation to see that effect. Over 80% of C-suite executives are considering or actively implementing GenAI technology today.

2. How does IBM address the evolving demand for generative AI while supporting digital transformation efforts?

IBM addresses the evolving demand for generative AI by investing in research and development, forming strategic partnerships, and continuously updating its product offerings. You can see this in what we have done in the 12 months since announcing the watsonx platform:

- We created a dedicated platform from our extensive AI investments called “watsonx”, to provide generative AI capabilities for business.

- We combine IBM Research and open source developments into enterprise-class assets that customers can leverage to realise this technology today.

- We work with our ecosystem of partners, open source communities, customers and hyperscaler providers to make this open to all, based on our technology principles of being Open, Trusted, Targeted and Empowering to our customers:

- Open: It’s based on the best open technologies available

- Trusted: It’s responsible and governed

- Targeted: It’s designed for the enterprise and targeted for business domains

- Empowering: It’s designed for value creators, not just users

3. What impact does generative AI have on government data utilisation and decision-making processes?

Governments are among the most data-rich entities on the planet, and their focus is to deliver optimal citizen services. Generative AI is an excellent platform for reducing bureaucracy and increasing insight-driven decision-making in support of those services. Generative AI tools like watsonx can analyse vast amounts of unstructured data, including government records, public statements, and news feeds, to extract valuable insights and inform decision-making processes.

By processing large datasets quickly and accurately, these tools can help governments identify trends, patterns, and correlations that might otherwise go unnoticed. Additionally, generative AI can generate tailored reports and visualisations to communicate complex ideas effectively, aiding in transparency and accountability. However, it is important to consider ethical concerns and ensure that the use of generative AI aligns with relevant laws and regulations governing data privacy and security.

4. How does IBM ensure the ethical use of generative AI in its solutions, especially concerning data privacy and security?

IBM ensures the ethical use of generative AI in its solutions by focusing on four main pillars: Culture, Technology, Governance, and Privacy. Within these pillars, IBM places a strong emphasis on data privacy and security. This includes implementing robust data governance practices, ensuring continuous model tuning and improvement, addressing legal and ethical considerations, incorporating human oversight, maintaining model transparency, and complying with relevant data protection regulations. By addressing these aspects, IBM strives to build trust in AI while protecting user data and upholding data privacy rights.

Businesses need AI that is accurate, scalable, and adaptable.

- Businesses demand accurate results they can trust.

- Organisations want to build and scale AI quickly and across clouds.

- Businesses want to build AI based on models that can easily be adapted to new scenarios and use cases.

IBM’s approach to AI is based on four core beliefs:

- Open: It’s based on the best open technologies available

- Trusted: It’s responsible and governed

- Targeted: It’s designed for the enterprise and targeted for business domains

- Empowering: It’s designed for value creators, not just users

5. Looking ahead, what emerging trends do you foresee in generative AI?

Looking ahead, I foresee several emerging trends in generative AI, including increased adoption in various industries, further development of explainable AI, and the integration of generative AI with other cutting-edge technologies such as quantum computing and edge computing. Additionally, I anticipate the emergence of new ethical guidelines and regulations surrounding the use of generative AI.

The latest advancements in generative AI have organisations waking up to the full potential of AI.

- AI is having a “Netscape moment.” ChatGPT is doing for AI what Netscape did for the Internet.

- But flashy consumer use cases are not where the real transformational powers lie.

- Foundation models are set to radically change how businesses operate. And quickly.

- In fact, an upcoming study from IBM finds that 41% of IT professionals say their company is currently exploring generative AI and 27% say it is actively using it.

- As the most powerful generative AI is powered by foundation models, we expect this to quickly drive AI adoption.

But businesses are overwhelmed, underprepared, and unsure how to profit from AI.

- Like any technology going through rapid development, AI can be hazardous, especially in business settings.

- Developed in the wrong way, AI can be frivolous or dangerous.

- AI can be just plain wrong, hallucinating or producing toxic results.

- For AI today, there are too few rules of the road.

Businesses need AI that is accurate, scalable, and adaptable.

- Businesses demand accurate results they can trust.

- Organisations want to build and scale AI quickly and across clouds.

- Businesses want to build AI based on models that can easily be adapted to new scenarios and use cases.

Justin Lavadia is a content producer and editor at Public Spectrum with a diverse writing background spanning various niches and formats. With a wealth of experience, he brings clarity and concise communication to digital content. His expertise lies in crafting engaging content and delivering impactful narratives that resonate with readers.